Checkmarx has published its Seven Steps to Safely Use Generative AI in Application Security report, which analyzes key concerns, usage patterns and buying behaviors relating to the use of AI in enterprise application development. The global study exposed the tension between the need to empower both development and application security (AppSec) teams with the productivity benefits of AI tools and the need to establish governance to mitigate emerging risks.

“Enterprise CISOs are grappling with the need to understand and manage new risks around generative AI without stifling innovation and becoming roadblocks within their organizations,” said Sandeep Johri, CEO at Checkmarx. “GenAI can help time-pressured development teams scale to produce more code more quickly, but emerging problems such as AI hallucinations usher in a new era of risk that can be hard to quantify. Checkmarx has successfully foreseen the problems that can arise with AI-generated code and we’re proud to be delivering next-stage solutions within the Checkmarx One platform today.”

Highlights of the global study include the following findings, which show how difficult it is to establish and enforce governance:

- Only 29% of organizations have established any form of governance

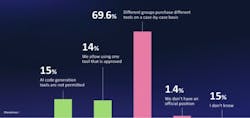

- 15% of respondents have explicitly prohibited the use of AI tools for code generation within their organizations

- 99% report that AI code-generation tools are being used regardless of prohibitions

- 70% say there is no centralized strategy for generative AI, with purchasing decisions made on an ad-hoc basis by individual departments

- 60% are worried about GenAI attacks such as AI hallucinations

- 80% are worried about security threats stemming from developers using AI

Many CISOs are seeking to build the right levels and types of governance in order to permit their application development teams to use AI coding tools. Given the ease of adoption, flexibility and utility of these tools, security leaders clearly understand their potential for helping to speed and scale application development in a time-pressured business environment.

However, generative AI is currently unable to follow secure coding practices or to produce truly secure code, which motivates some security teams to consider AI-driven security tools to help manage the proliferation of development teams’ AI-generated code. The Checkmarx study found that:

- 47% of respondents indicated interest in allowing AI to make unsupervised changes to code

- 6% said that they wouldn’t trust AI to be involved in security actions within their vendor tools

“The responses of these global CISOs expose the reality that developers are using AI for application development even though it can’t reliably create secure code, which means that security teams are being hit with a flood of new, vulnerable code to manage,” said Kobi Tzruya, Chief Product Officer at Checkmarx. “This illustrates the need for security teams to have their own productivity tools to manage, correlate and help them prioritize vulnerabilities, as Checkmarx One is designed to help them do.”

Methodology

In early 2024, Checkmarx commissioned a global research firm to survey 900 CISOs and application security professionals at companies in North America, Europe and Asia-Pacific with annual revenue of $750 million or more.

To review the report and learn the seven steps to safely use generative AI in application development, visit this page.